What Is an Artificial Neuron? The Complete Guide (2026)

- Mar 28

- 20 min read

Every time you ask Google to translate a sentence, every time a phone unlocks with your face, every time a doctor uses AI to spot a tumor in an X-ray — the same tiny math function sits at the heart of it all. It's called an artificial neuron. It has no size. No weight. No consciousness. But connect billions of them together, train them on enough data, and they can do things that once seemed impossible. This is the story of where that idea started — and how it quietly became one of the most important inventions in human history.

Don’t Just Read About AI — Own It. Right Here

TL;DR

An artificial neuron is a simple math function: it takes inputs, multiplies them by weights, sums them, and passes the result through an activation function to produce an output.

The idea was first introduced by Warren McCulloch and Walter Pitts in 1943 — over 80 years ago.

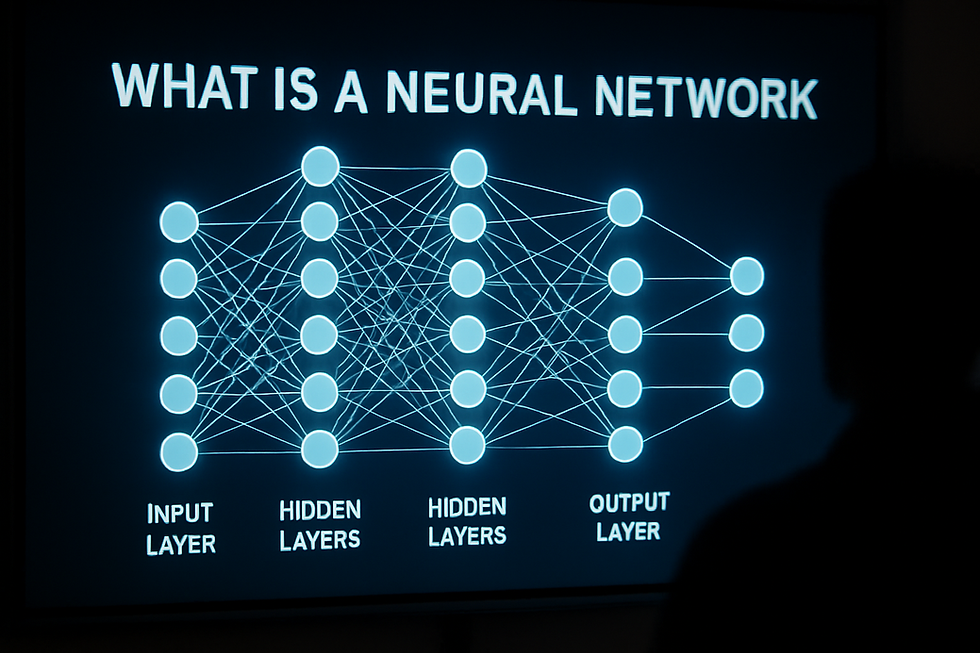

Modern neural networks connect millions or billions of artificial neurons in layers to learn patterns from data.

Three verified breakthroughs: AlexNet cracked image recognition in 2012, Google Neural Machine Translation cut translation errors by 60% in 2016, and AlphaFold solved the 50-year protein folding problem in 2020.

The global neural network market was valued at $34.52 billion USD in 2024, with projections to reach $537.81 billion by 2034.

Artificial neurons are inspired by — but fundamentally different from — biological neurons in the brain.

What is an artificial neuron?

An artificial neuron is a mathematical function modeled after a biological neuron. It receives numerical inputs, multiplies each by a weight representing its importance, sums the results, adds a bias value, and passes the total through an activation function. If the combined signal is strong enough, the neuron produces an output. Billions of these neurons, linked in layers, form the neural networks that power modern AI.

Table of Contents

1. What Is an Artificial Neuron? (Definition)

An artificial neuron is the smallest building block of a neural network. It is a mathematical function — not a physical object — designed to mimic how a biological neuron in the brain processes information.

Here is the core idea in plain terms: An artificial neuron receives numbers as inputs. It multiplies each input by a number called a weight. Weights represent how important each input is. The neuron then adds all the weighted inputs together, adds a second number called a bias, and pushes the total through a mathematical formula called an activation function. The activation function decides what the neuron's output will be.

A single artificial neuron is not powerful. It can only perform simple math. But when you connect thousands, millions, or even billions of them in layers — each layer feeding its outputs into the next — you get a neural network. Neural networks can recognize faces, translate languages, write text, and predict protein structures.

The term "artificial neuron" is used interchangeably with "node" or "unit" in technical literature. The concept draws from neuroscience but lives entirely in mathematics and computer code (Wikipedia, "Artificial neuron," updated January 2025).

2. A Brief History: From 1943 to Today

2.1 The Birth: McCulloch and Pitts (1943)

The artificial neuron was born on paper in 1943. American neurophysiologist Warren McCulloch and logician Walter Pitts published a paper titled "A Logical Calculus of the Ideas Immanent in Nervous Activity." In it, they proposed the first mathematical model of how a single neuron might work (McCulloch & Pitts, 1943).

Their model was extremely simple. It accepted binary inputs — only 1 (true) or 0 (false). It applied a threshold: if the sum of the inputs met or exceeded the threshold, the neuron fired and output 1. Otherwise, it output 0. This was called a Threshold Logic Unit.

McCulloch and Pitts did not build a learning machine. They built a theoretical tool. Their goal was to show that neurons, even in simple form, could perform basic logic operations like AND, OR, and NOT. The paper has since been cited nearly 30,000 times (Dr. Kais Dukes, LinkedIn, June 2023).

2.2 The Perceptron: Rosenblatt (1957)

Frank Rosenblatt, a psychologist at Cornell Aeronautical Laboratory, took the McCulloch-Pitts idea and made it learn. In 1957, he developed the Perceptron — the first artificial neuron that could adjust its weights based on feedback (Wikipedia, "Perceptron," updated 2025).

Unlike the McCulloch-Pitts model, Rosenblatt's perceptron could be trained. If it made a mistake, the learning rule adjusted the weights to reduce future errors. Rosenblatt even built physical hardware — the Mark I Perceptron — which used a 20×20 grid of 400 photocells connected to 512 hidden neurons and 8 output neurons (Wikipedia, "Perceptron," updated 2025).

The media went wild. The New York Times reported in 1958 that the perceptron might eventually "walk, talk, see, write, reproduce itself, and be conscious of its existence" — claims that were wildly exaggerated and set the stage for a painful backlash.

2.3 The Setback: Minsky and Papert (1969)

In 1969, AI researchers Marvin Minsky and Seymour Papert published Perceptrons: An Introduction to Computational Geometry. Through rigorous math, the book proved that a single-layer perceptron could not solve certain important problems — most famously, the XOR function (Wikipedia, "Perceptrons (book)," updated 2025).

XOR is a simple logic operation: it returns true when exactly one of two inputs is true. But a single-layer perceptron draws a straight line to separate categories. XOR cannot be separated by a straight line. It is "non-linearly separable." The perceptron simply could not learn it (Sean Trott, Substack, January 2024).

Minsky and Papert's book contributed to what became known as the "AI winter" — a period of reduced funding and enthusiasm for neural network research lasting from the early 1970s through the early 1980s. Research labs lost funding. Some researchers abandoned the field entirely (Sean Trott, 2024).

2.4 The Revival: Backpropagation (1980s)

The solution to the XOR problem — and the key to the neural network renaissance — was multi-layer networks combined with a training technique called backpropagation. This algorithm allows errors to flow backward through the network, layer by layer, adjusting weights at every level so the whole network learns together.

Paul Werbos first described the mathematical approach in 1974, but the chilling effect of the AI winter delayed his neural network publication until 1982. It was not until the mid-1980s, when David Rumelhart, Geoffrey Hinton, and Ronald Williams popularized backpropagation for multi-layer networks, that neural networks returned to mainstream AI research (Rumelhart et al., 1986).

2.5 The Deep Learning Explosion (2012–Present)

The modern era of AI began on September 30, 2012, when a neural network called AlexNet won the ImageNet competition by a massive margin. This event is covered in detail in the Case Studies section below. Since then, artificial neurons have become the backbone of nearly every major AI system on Earth.

3. How Does an Artificial Neuron Actually Work?

Step-by-Step Breakdown

Here is exactly what happens inside a single artificial neuron, in order:

Step 1 — Receive Inputs: The neuron receives numerical values. These could be pixel values from an image, word embeddings from text, or sensor readings from a device. Each input represents one piece of data.

Step 2 — Multiply by Weights: Each input is multiplied by a corresponding weight. Weights represent how important each input is to the neuron's decision. A weight of 2.0 means "this input matters twice as much." A weight of 0.1 means "this input barely matters."

Step 3 — Sum the Products: All the weighted inputs are added together. This produces a single number — the weighted sum.

Step 4 — Add the Bias: A bias is an additional number added to the weighted sum. It shifts the neuron's sensitivity up or down. Bernard Widrow introduced this concept in 1960 (Wikipedia, "Artificial neuron," 2025).

Step 5 — Pass Through the Activation Function: The result goes through a mathematical function called the activation function. This function decides what the neuron actually outputs. It introduces non-linearity — the ability to learn complex, curved patterns rather than just straight lines.

Step 6 — Produce the Output: The neuron produces a single output number. This output becomes an input for neurons in the next layer.

Worked Example

Imagine a neuron with two inputs: temperature (30°C) and humidity (80%).

Input | Value | Weight | Weighted Value |

Temperature | 30 | 0.6 | 18.0 |

Humidity | 80 | 0.4 | 32.0 |

Weighted Sum | — | — | 50.0 |

+ Bias | — | — | +5.0 |

Total | — | — | 55.0 |

The activation function (ReLU) receives 55. Since 55 is positive, it outputs 55. The neuron fires and sends 55 to the next layer. During training, if this output leads to a wrong prediction, backpropagation will adjust the weights and bias to improve future accuracy.

4. Activation Functions: The Decision Makers

The activation function is what makes artificial neurons capable of learning complex patterns. Without it, a neural network — no matter how many layers it had — would only learn straight-line relationships.

Here is how the most important activation functions compare:

Activation Function | Output Range | Best Used For | Main Weakness |

Step (Threshold) | 0 or 1 | Historical; basic logic | Cannot learn gradual patterns |

Sigmoid | 0 to 1 | Binary classification (output layer) | Vanishing gradient in deep networks |

Tanh | −1 to 1 | Hidden layers in shallow networks | Vanishing gradient in deep networks |

ReLU | 0 to ∞ | Most deep learning tasks | "Dying ReLU" — some neurons stop learning |

Leaky ReLU | −∞ to ∞ | Deep networks where dying neurons are a risk | Requires tuning of leak parameter |

Sources: Google for Developers, "Activation Functions"; GeeksforGeeks, "Tanh vs. Sigmoid vs. ReLU," November 2025; EITCA Academy, May 2024

Why ReLU Dominates

ReLU (Rectified Linear Unit) is the most widely used activation function in deep learning today. The formula is remarkably simple: if the input is positive, output it unchanged; if negative, output zero.

AlexNet — the network that launched the deep learning revolution in 2012 — used ReLU. The original paper by Krizhevsky, Sutskever, and Hinton reported that deep networks with ReLU trained "several times faster" than equivalent networks using the older tanh function (Krizhevsky et al., NeurIPS, 2012).

ReLU avoids the vanishing gradient problem that plagued sigmoid and tanh in deep networks. When gradients shrink to near-zero during training, earlier layers stop learning. ReLU keeps gradients flowing because it has a constant gradient of 1 for all positive inputs (Google for Developers).

5. Biological Neurons vs. Artificial Neurons

Artificial neurons were inspired by biological neurons — but they are not copies. The differences are significant and often misunderstood.

Feature | Biological Neuron | Artificial Neuron |

Structure | Dendrites, cell body (soma), axon, synapses | Inputs, weights, bias, activation function, output |

Signal type | Electrochemical (action potentials) | Numerical (floating-point numbers) |

Count | ~86 billion in human brain | Thousands to billions (varies by network) |

Learning | Continuous, real-time (synaptic plasticity) | Only during training; fixed during use |

Energy | ~20 watts for entire human brain | GPUs consuming thousands of watts |

Adaptability | Learns instantly from new experience | Cannot learn without retraining |

Sources: GeeksforGeeks, "Difference between ANN and BNN," July 2025; Sophos, "Man vs Machine," 2017; Milvus AI Quick Reference

The human brain has approximately 86 billion neurons, each forming up to 10,000 synaptic connections — roughly 100 trillion connections total. The most advanced artificial neural networks today have tens of billions of parameters. But these parameters are not equivalent to biological synapses. A biological neuron processes information through complex biochemistry involving neurotransmitters, ion channels, and electrochemical gradients. An artificial neuron performs a weighted sum.

This distinction matters. Artificial neurons are powerful tools for pattern recognition. But they do not "think," "understand," or "feel" in any meaningful sense. They compute (Richard Nagyfi, Medium, 2018).

6. Case Studies: Where Artificial Neurons Changed Everything

Case Study 1: AlexNet Breaks Image Recognition (2012)

Detail | Information |

Who | Alex Krizhevsky, Ilya Sutskever, Geoffrey Hinton — University of Toronto |

What | A convolutional neural network (CNN) that won the ImageNet competition |

When | September 30, 2012 |

Result | Top-5 error rate of 15.3% — a 9.8 percentage point lead over the next best system (26.2%) |

Before AlexNet, computers struggled to recognize objects in photos. Researchers spent months manually designing algorithms to detect edges, corners, and textures. AlexNet learned these features automatically from 1.2 million training images across 1,000 categories (Krizhevsky et al., 2012; Pinecone, "AlexNet and ImageNet").

AlexNet used five convolutional layers and three fully connected layers — approximately 60 million parameters total. It was one of the first networks to demonstrate that ReLU activation functions and GPU-based training could make deep learning practical at scale.

The impact was immediate. Google, Facebook, and other tech companies shifted billions of dollars toward deep learning research. NVIDIA began redesigning its GPUs for AI workloads. Google acquired DeepMind in 2014 and released TensorFlow in 2015 — both direct consequences of the deep learning revolution AlexNet ignited (DejanAI, 2025; MIT Technology Review, September 2014).

Case Study 2: Google Neural Machine Translation (2016)

Detail | Information |

Who | Google Brain team |

What | Neural network (GNMT) replacing phrase-based translation in Google Translate |

When | November 2016 |

Result | Translation errors reduced by 55–85% across major language pairs |

Google Translate had used statistical, phrase-by-phrase translation since 2007. It worked by breaking sentences into small chunks and translating each independently. The results were often awkward — especially for languages with very different word orders, like Chinese and English.

GNMT replaced this with a deep LSTM neural network: 8 encoder layers and 8 decoder layers, with over 160 million parameters. Instead of translating word by word, it processed entire sentences at once, capturing context across the full sentence (Wu et al., arXiv, 2016; Wikipedia, "Google Neural Machine Translation," 2025).

On its launch day, GNMT handled approximately 18 million Chinese-to-English translations. Human evaluators rated the system at about 4.5 out of 6 for quality — compared to roughly 3.5 for the old phrase-based system (NPR, October 2016; Google Research Blog, September 2016).

Google later replaced GNMT with transformer-based models by 2020, but GNMT remains one of the most important real-world demonstrations of artificial neurons operating at production scale.

Case Study 3: AlphaFold Solves Protein Folding (2020–2024)

Detail | Information |

Who | Google DeepMind — Demis Hassabis, John Jumper, and team |

What | A deep learning system predicting 3D protein structures from amino acid sequences |

When | 2020 (AlphaFold 2); May 2024 (AlphaFold 3); October 2024 (Nobel Prize) |

Result | 200+ million protein structures predicted; 3 million researchers using it in 190+ countries |

Proteins are the molecular machines of life. Each folds into a specific 3D shape that determines its function. Before AlphaFold, determining even one protein's structure could take a scientist years and cost hundreds of thousands of dollars.

AlphaFold 2 won the 14th Critical Assessment of protein Structure Prediction (CASP14) in 2020 with a median backbone error of less than 1 angstrom — roughly the size of a single atom. This was 3 times more accurate than the next best system and roughly matched expensive experimental methods (Jumper et al., Nature, 2021; Google DeepMind, "AlphaFold").

In July 2022, DeepMind and the European Bioinformatics Institute uploaded predicted structures for approximately 200 million proteins — nearly every known protein on Earth — to a free database. By 2024, the count reached 214 million (Wikipedia, "AlphaFold," 2025).

AlphaFold 3, launched May 2024, extended predictions to DNA, RNA, and other molecules. It showed at least a 50% improvement in accuracy for protein-molecule interactions over existing methods (Google DeepMind Blog, May 2024).

In October 2024, Demis Hassabis and John Jumper were awarded the Nobel Prize in Chemistry for AlphaFold — a direct testament to the power of artificial neurons in solving fundamental scientific problems (Wikipedia, "AlphaFold," 2025; Lasker Foundation, 2023).

7. The Neural Network Market: By the Numbers

Artificial neurons are the foundation of a rapidly growing global industry.

Metric | Value | Year | Source |

Global neural network market size | $34.52 billion USD | 2024 | Precedence Research, 2025 |

Projected market size | $537.81 billion USD | 2034 | Precedence Research, 2025 |

Market CAGR (2025–2034) | 31.60% | — | Precedence Research, 2025 |

North America's market share | ~40% | 2024 | Precedence Research, 2025 |

CNN segment share of market | ~35% | 2024 | Precedence Research, 2025 |

Image recognition app share | ~30% | 2024 | Precedence Research, 2025 |

Daily users of Google's ANN services | 1.2 billion | 2024 | Google AI Report, 2024 |

IT & telecom end-user share | ~33% | 2024 | Precedence Research, 2025 |

Healthcare, finance, automotive, and telecommunications are the fastest-growing sectors. North America leads in total spending, but the Asia-Pacific region is expected to see the fastest growth through 2034 (Precedence Research, 2025).

8. Pros and Cons of Artificial Neurons

Pros

Pattern recognition at scale: Neural networks find patterns in vast, complex datasets that humans would never spot — from medical imaging to financial fraud detection.

Automatic feature learning: Unlike older AI methods, neural networks learn relevant features directly from raw data. No manual engineering required.

Scalability: Adding more neurons and layers increases a network's capacity to handle harder problems.

Versatility: The same fundamental architecture works across image recognition, language processing, game playing, and scientific research.

Speed at inference: Once trained, neural networks can make predictions in microseconds.

Cons

Data hungry: Networks typically need massive datasets to train well. Small datasets often lead to poor performance or overfitting.

Computational cost: Training large networks requires powerful GPUs or TPUs and significant electricity. Training GPT-3 alone consumed an estimated 1,287 MWh of energy (Patterson et al., Google, 2022).

Black box problem: It is often difficult to understand why a neural network made a specific decision. This limits trust in high-stakes fields like medicine and law.

Overfitting risk: Networks can memorize training data instead of learning general patterns, resulting in poor performance on new, unseen data.

Bias amplification: If training data contains biases, the network learns and reproduces them — sometimes at massive scale.

9. Myths vs. Facts

Myth | Fact |

"Artificial neurons think like human brains." | Artificial neurons perform weighted math. They do not think, reason, or understand. They are mathematical functions (GeeksforGeeks, July 2025). |

"A single artificial neuron can solve complex problems." | A single neuron only performs a simple calculation. Complex problem-solving requires networks of neurons in layers (Wikipedia, "Artificial neuron," 2025). |

"Neural networks have always been successful." | They went through a major "AI winter" from the 1970s to early 1980s after Minsky and Papert highlighted their limitations (Sean Trott, January 2024). |

"Biological and artificial neurons work the same way." | Biological neurons use electrochemical signals and learn continuously in real time. Artificial neurons use numerical math and only learn during training (Richard Nagyfi, Medium, 2018). |

"More neurons always means better performance." | Architecture, training data quality, and activation functions matter as much as size. A poorly designed large network can underperform a well-designed small one (Google for Developers). |

10. Pitfalls and Risks

The Vanishing Gradient Problem

In deep networks using sigmoid or tanh activation functions, gradients shrink to near-zero as they flow backward through many layers. This means the earliest layers effectively stop learning. ReLU was developed largely to address this issue (EITCA Academy, May 2024).

The Dying ReLU Problem

If a ReLU neuron receives only negative inputs during training, its output becomes permanently zero and it stops learning forever. This is called a "dead" neuron. Variants like Leaky ReLU and ELU were created specifically to prevent this (GeeksforGeeks, November 2025).

When a network memorizes training data rather than learning general patterns, it performs brilliantly on training examples but poorly on new data. Techniques like dropout (randomly disabling neurons during training) and data augmentation help prevent this.

Adversarial Attacks

Neural networks can be fooled by tiny, carefully crafted changes to input data. A slight pixel-level alteration to an image — invisible to human eyes — can cause a network to completely misclassify it. This poses serious concerns for self-driving cars and security systems (Richard Nagyfi, Medium, 2018).

11. Future Outlook

Neuromorphic Computing

Scientists are building computer chips that mimic the physical structure of biological brains. Intel's Loihi chip and IBM's neuromorphic research aim to process information using hardware-based artificial neurons. These chips promise dramatically lower energy consumption compared to traditional GPU-based neural networks.

Transformer Architectures

Since 2017, the transformer architecture has largely replaced older designs like LSTMs for language tasks. Transformers use a mechanism called attention that allows neurons to focus on the most relevant parts of the input. Models like GPT and BERT are built on transformers and have transformed natural language processing.

Scaling Laws

Research from OpenAI (Kaplan et al., 2020) demonstrated that neural network performance improves predictably as you increase parameters, training data, and compute. This finding has driven the race to build ever-larger models — with consequences for both capability and energy consumption.

AlphaFold's success has opened the door for neural networks to accelerate drug development. Isomorphic Labs, a DeepMind subsidiary, is already using AlphaFold 3 to design novel molecules for treating diseases (Google DeepMind Blog, May 2024). This represents one of the most promising near-term applications of artificial neurons in science.

12. FAQ

Q1: What is an artificial neuron in simple terms?

An artificial neuron is a math function that takes in numbers, multiplies them by importance values called weights, adds them up, and uses a formula called an activation function to decide its output. It is the basic unit of a neural network.

Q2: Who invented the artificial neuron?

Warren McCulloch and Walter Pitts first proposed the concept in 1943 in their paper "A Logical Calculus of the Ideas Immanent in Nervous Activity." Frank Rosenblatt later built the first learning version — the Perceptron — in 1957.

Q3: How is an artificial neuron different from a biological neuron?

A biological neuron uses electrochemical signals and learns continuously in real time. An artificial neuron performs numerical calculations and only learns during a defined training process. They share conceptual similarity but are fundamentally different systems.

Q4: What does an activation function do?

An activation function transforms a neuron's weighted sum into its final output. It introduces non-linearity — the ability to learn complex, curved patterns rather than only straight lines. ReLU, sigmoid, and tanh are the most common examples.

Q5: Why is ReLU the most popular activation function?

ReLU is simple to compute, avoids the vanishing gradient problem that slows training in deep networks, and has proven effective across a wide range of tasks. AlexNet popularized its use in 2012, and it has remained dominant ever since.

Q6: What is a neural network?

A neural network is a collection of artificial neurons arranged in layers. Each layer's outputs feed into the next layer's inputs. The network learns by adjusting the weights between neurons during a training process called backpropagation.

Q7: What caused the AI winter of the 1970s–1980s?

Minsky and Papert's 1969 book Perceptrons proved that single-layer neural networks could not solve certain critical problems. Combined with limited computing power and resulting funding cuts, this led to over a decade of reduced interest in neural network research.

Q8: How did backpropagation revive neural networks?

Backpropagation calculates how much each weight contributed to the network's prediction error, then adjusts all weights simultaneously. It made training multi-layer networks feasible for the first time, enabling deep learning in the 1980s.

Q9: What is the vanishing gradient problem?

In deep networks, the gradients used to update weights can shrink to near-zero as they flow through many layers. This causes earlier layers to effectively stop learning. ReLU and other modern activation functions largely solve this problem.

Q10: How many artificial neurons are in a modern AI system?

It varies enormously. A simple image classifier might use thousands of neurons. A large language model like GPT-3 has 175 billion parameters — each representing a weight within the network's neurons and connections.

Q11: Can a single artificial neuron solve problems on its own?

No. A single neuron can only compute a simple weighted sum. All the impressive capabilities of AI — image recognition, language understanding, game playing — emerge from networks of many neurons working together in layers.

Q12: What industries use artificial neurons the most?

As of 2024, IT and telecommunications held the largest share (~33%) of neural network end-users, followed by healthcare and automotive. Image recognition and computer vision account for roughly 30% of neural network applications (Precedence Research, 2025).

Q13: Are artificial neurons energy-efficient?

Training large neural networks is energy-intensive. But inference — using a trained model to make predictions — is relatively fast and efficient. Neuromorphic computing chips aim to make both training and inference far more energy-efficient in the coming years.

Q14: What is the bias in an artificial neuron?

Bias is a number added to the weighted sum before the activation function processes it. It shifts the neuron's decision boundary, making it more or less likely to fire regardless of input values. Bernard Widrow introduced this concept in 1960.

Q15: Will artificial neurons eventually match biological brains?

This remains one of the most debated questions in AI. Current artificial neurons are optimized for specific tasks. Biological brains are vastly more complex, adaptable, and energy-efficient. Closing this gap is one of the biggest long-term challenges in AI research.

Key Takeaways

An artificial neuron is a mathematical function that takes inputs, applies weights, adds a bias, and passes the result through an activation function to produce an output.

The concept originated with McCulloch and Pitts in 1943. It evolved through the Perceptron (1957), the AI winter (1970s–1980s), backpropagation (1980s), and the deep learning explosion (2012–present).

ReLU is the dominant activation function in modern deep learning because it avoids the vanishing gradient problem and is computationally efficient.

Three landmark verified case studies — AlexNet (2012), Google Neural Machine Translation (2016), and AlphaFold (2020) — demonstrate the transformative power of artificial neurons.

The global neural network market was $34.52 billion USD in 2024 and is projected to reach $537.81 billion by 2034 at a 31.60% CAGR.

Artificial neurons are not biological neurons. They are mathematically inspired by them but operate through fundamentally different mechanisms.

Key challenges include the vanishing gradient problem, dying neurons, overfitting, adversarial attacks, and the energy cost of training.

The near-term future points toward neuromorphic computing, transformer architectures, and AI-accelerated scientific discovery in drug design and beyond.

Actionable Next Steps

Solidify the fundamentals. Re-read this guide and make sure you can explain — in plain English — what a weight, bias, and activation function do. Understanding these three concepts is the entry point to everything else.

Take a free online course. Google's Machine Learning Crash Course (developers.google.com/machine-learning/crash-course) teaches neural network fundamentals with hands-on exercises at zero cost.

Build a simple network. Use Python libraries like TensorFlow or PyTorch to create a single-layer network that classifies simple data. Both are free, well-documented, and have beginner tutorials.

Follow live research. Read Google DeepMind's blog (deepmind.google) and OpenAI's research page (openai.com/research) for the latest breakthroughs powered by artificial neurons.

Learn about AI ethics. Before going deeper technically, understand the risks. IBM's AI Fairness 360 toolkit (github.com/Trusted-AI/AI-Fairness-360) is a solid starting point for understanding bias in neural networks.

Glossary

Term | Definition |

A mathematical formula applied to a neuron's weighted sum to produce its output. Introduces non-linearity. | |

Artificial Neuron | A mathematical function that processes inputs using weights, a bias, and an activation function. The basic unit of a neural network. |

A training algorithm that calculates each weight's contribution to the network's error, then adjusts weights to reduce future errors. | |

Bias (neuron) | A number added to the weighted sum to shift the neuron's decision threshold. |

A neural network type specialized for processing grid-like data, especially images. | |

A branch of AI that uses neural networks with many layers to learn patterns from large datasets. | |

Gradient | A measure of how much a small change in a weight affects the network's error. Used during training to guide updates. |

Long Short-Term Memory — a neural network layer designed to learn from sequences of data like text or time series. | |

A collection of artificial neurons arranged in layers, designed to learn patterns from data. | |

When a network memorizes training data instead of learning general patterns, causing poor performance on new data. | |

The first artificial neuron capable of learning, developed by Frank Rosenblatt in 1957. | |

Rectified Linear Unit — an activation function that outputs the input if positive, or zero if negative. Most widely used in deep learning. | |

An S-shaped activation function mapping inputs to values between 0 and 1. Used for binary classification. | |

A problem in deep networks where gradients shrink to near-zero during training, causing earlier layers to stop learning. | |

A number representing the importance of an input to a neuron's calculation. Adjusted during training. |

Sources & References

McCulloch, W. & Pitts, W. (1943). "A Logical Calculus of the Ideas Immanent in Nervous Activity." Bulletin of Mathematical Biophysics, 5(4), 115–133. https://link.springer.com/article/10.1007/BF02459937

Wikipedia. "Artificial neuron." Updated January 2025. https://en.wikipedia.org/wiki/Artificial_neuron

Wikipedia. "Perceptron." Updated January 2025. https://en.wikipedia.org/wiki/Perceptron

Wikipedia. "Perceptrons (book)." Updated January 2025. https://en.wikipedia.org/wiki/Perceptrons_(book)

Wikipedia. "AlphaFold." Updated January 2025. https://en.wikipedia.org/wiki/AlphaFold

Wikipedia. "Google Neural Machine Translation." Updated 2025. https://en.wikipedia.org/wiki/Google_Neural_Machine_Translation

Dr. Kais Dukes. "McCulloch-Pitts: The First Computational Neuron." LinkedIn, June 2023. https://www.linkedin.com/pulse/mcculloch-pitts-first-computational-neuron-dr-kais-dukes

Sean Trott. "Perceptrons, XOR, and the first 'AI winter'." Substack, January 2024. https://seantrott.substack.com/p/perceptrons-xor-and-the-first-ai

Krizhevsky, A., Sutskever, I. & Hinton, G. (2012). "ImageNet Classification with Deep Convolutional Neural Networks." NeurIPS 2012. https://proceedings.neurips.cc/paper/4824-imagenet-classification-with-deep-convolutional-neural-networks.pdf

Pinecone. "AlexNet and ImageNet: The Birth of Deep Learning." https://www.pinecone.io/learn/series/image-search/imagenet/

Wu, Y. et al. (2016). "Google's Neural Machine Translation System: Bridging the Gap between Human and Machine Translation." arXiv:1609.08144. https://arxiv.org/abs/1609.08144

Google Research Blog. "A Neural Network for Machine Translation, at Production Scale." September 2016. https://ai.googleblog.com/2016/09/a-neural-network-for-machine.html

NPR. "Google Announces Improvements to Translation System." October 3, 2016. https://www.npr.org/2016/10/03/496442106/google-announces-improvements-to-translation-system

IEEE Spectrum. "Google Translate Gets a Deep-Learning Upgrade." 2016. https://spectrum.ieee.org/google-translate-gets-a-deep-learning-upgrade

Jumper, J. et al. (2021). "Highly accurate protein structure prediction with AlphaFold." Nature, 596, 583–589. https://www.nature.com/articles/s41586-021-03819-2

Google DeepMind Blog. "AlphaFold 3 predicts the structure and interactions of all of life's molecules." May 8, 2024. https://blog.google/technology/ai/google-deepmind-isomorphic-alphafold-3-ai-model/

Google DeepMind. "AlphaFold." https://deepmind.google/science/alphafold/

Lasker Foundation. "AlphaFold — for predicting protein structures." 2023. https://laskerfoundation.org/winners/alphafold-a-technology-for-predicting-protein-structures/

Precedence Research. "Neural Network Market." October 2025. https://www.precedenceresearch.com/neural-network-market

Business Research Insights. "Artificial Neural Networks Market." October 2025. https://www.businessresearchinsights.com/market-reports/artificial-neural-networks-market-112504

Google for Developers. "Neural Networks: Activation Functions." https://developers.google.com/machine-learning/crash-course/neural-networks/activation-functions

GeeksforGeeks. "Tanh vs. Sigmoid vs. ReLU." November 27, 2025. https://www.geeksforgeeks.org/deep-learning/tanh-vs-sigmoid-vs-relu/

EITCA Academy. "What are the key differences between activation functions such as sigmoid, tanh, and ReLU..." May 21, 2024. https://eitca.org/artificial-intelligence/eitc-ai-adl-advanced-deep-learning/neural-networks/neural-networks-foundations/examination-review-neural-networks-foundations/

GeeksforGeeks. "Difference between ANN and BNN." July 2025. https://www.geeksforgeeks.org/machine-learning/difference-between-ann-and-bnn/

Sophos Blog. "Man vs Machine: Comparing Artificial and Biological Neural Networks." 2017. https://www.sophos.com/en-us/blog/man-vs-machine-comparing-artificial-and-biological-neural-networks

Richard Nagyfi. "The differences between Artificial and Biological Neural Networks." Medium, 2018. https://medium.com/data-science/the-differences-between-artificial-and-biological-neural-networks-a8b46db828b7

MIT Technology Review. "The Revolutionary Technique That Quietly Changed Machine Vision Forever." September 9, 2014. https://www.technologyreview.com/2014/09/09/171446/the-revolutionary-technique-that-quietly-changed-machine-vision-forever/

DejanAI. "AlexNet: The Deep Learning Breakthrough That Reshaped Google's AI Strategy." 2025. https://dejan.ai/blog/alexnet-the-deep-learning-breakthrough-that-reshaped-googles-ai-strategy/

Patterson, D. et al. (2022). "The Carbon Footprint of Machine Learning Training Will Plateau, Then Shrink." Google Research. https://arxiv.org/abs/2211.02001